Everyone is multi-tenanting AI coding tools now

25 August 2025

Like many, I was hit by tightening rate limits on Cursor. Like many, I also found out at some point this year that Claude Code is the best coding tool. And, like many, I also figured out that Claude Code is really expensive... Oh, and I got rate limited on that as well. So, like many (last time, I promise), I started looking around.

What I ended up doing was adding Kilo Code on top of my Cursor and Claude Code subscriptions. Now my workflow looks like this:

- Background agents: Cursor

- Asking questions and building simple features: Cursor, switching to Kilo when I run out

- The hard stuff: Claude Code

- Code review: CodeRabbit

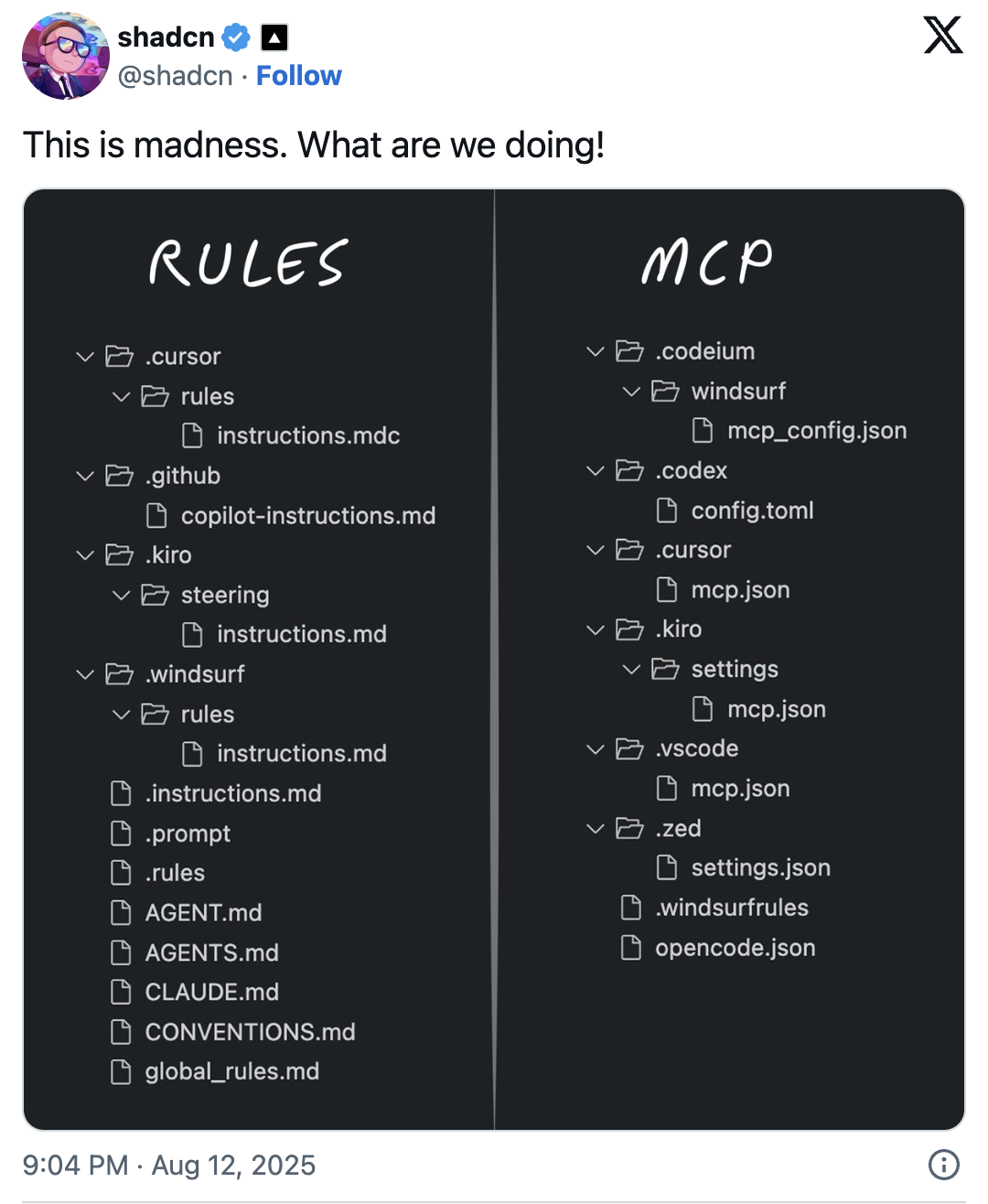

It's a pretty good setup, I think, but it does mean that I'm constantly switching tools. It also means that my codebases are peppered with rules files and mcp.json files for the various tools. Shadcn recently posted a screenshot on X.com that sums up the situation we're in.

Source: Original tweet

This got me thinking about where we are, and where we are going, with AI coding.

Models and prompts are more important than the tools

Even though Claude Code is noticeably more capable than other tools, it still fails in the same ways. In fact, all of them still fail in exactly the ways I wrote about almost a year ago.

Kilo and Cursor are rapidly assimilating features from Claude Code, like using tools to search the codebase and making todo lists. Those product improvements certainly help, but none of them help nearly as much as switching from Claude 4 Sonnet to Claude 4.1 Opus.

Moreover, with really good agent.md and rules files, you can get much the same coding performance out of Kilo or Cursor as you get from Claude Code. So the moment a significantly better model comes out, or the moment Anthropic manages to deliver Opus at 1/10th the price, THAT is what will give AI coding its next step-change improvement. Everyone will notice that their tool suddenly got better, and some may credit whichever tool they happen to be using ("wow dude, Cursor just got way better!"), but it will become clear quickly enough that the model did it. The same applies to all of the web-based coding tools: Lovable, Bolt, Replit, v0 and 100 others.

It is hard to say what Cursor's moat really is

Certainly, Cursor has the most brand awareness. That is a moat of sorts, but not a very deep one. In my personal opinion, Background Agents are a really good product, and they are now the main reason I still pay for Cursor. But what else? Using Kilo (or Augmentcode, or Roo, or Windsurf, or Cline, or even Copilot) in VS Code is almost exactly the same experience.

Thanks to GitHub, it's effortless to keep everything in sync. I've even found myself running Cursor and Kilo (in VS Code) at the same time, either working on different isolated parts of Magicdoor.ai, or working on Magicdoor in one and on this website in the other. With all of them using the exact same models and fundamentally sharing the same UX (chat + context engineering), switching costs are effectively zero.

People are starting to integrate these rules

I came across an open-source codebase where the Claude.md file (for Claude Code) simply said "AGENTS.MD", which presumably leads Claude to read the other file, the one containing all the actual rules. I'm sure people will come up with much more advanced ways to manage this, but I guess it's a good start. To me it all points to commoditization, although I do think LLMs will keep their different 'personalities' and subtle differences in behavior, and those will drive some model lock-in.
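To make the pointer-file idea concrete, here is a minimal sketch that extends it to several tools at once. It assumes a single shared AGENTS.md at the repository root. The helper `write_pointer_files` is hypothetical, and the Cursor and Kilo paths are assumptions, so check each tool's documentation for where it actually reads rules from.

```python
from pathlib import Path

# Tool-specific rule files that should just redirect to AGENTS.md.
# CLAUDE.md mirrors the example above; the other two paths are ASSUMPTIONS.
POINTER_FILES = (
    "CLAUDE.md",                  # Claude Code
    ".cursor/rules/agents.mdc",   # Cursor (assumed location)
    ".kilocode/rules/agents.md",  # Kilo Code (assumed location)
)

POINTER_TEXT = "All project rules live in AGENTS.md at the repository root. Read that file first.\n"


def write_pointer_files(repo_root: Path) -> list[Path]:
    """Create thin pointer files that send each tool to the shared AGENTS.md.

    Files that already exist are skipped, so hand-written rules are never overwritten.
    Returns the list of files that were actually created.
    """
    agents = repo_root / "AGENTS.md"
    if not agents.exists():
        raise FileNotFoundError(f"{agents} not found; write the shared rules first")

    written = []
    for rel_path in POINTER_FILES:
        target = repo_root / rel_path
        if target.exists():
            continue  # leave existing tool-specific rules alone
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(POINTER_TEXT, encoding="utf-8")
        written.append(target)
    return written
```

Calling `write_pointer_files(Path("."))` from a repository root would create whichever pointer files are missing. The rules themselves then live in exactly one place, and the per-tool files stay trivial.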