OpenClaw as CTO running many coding agents

18 March 2026

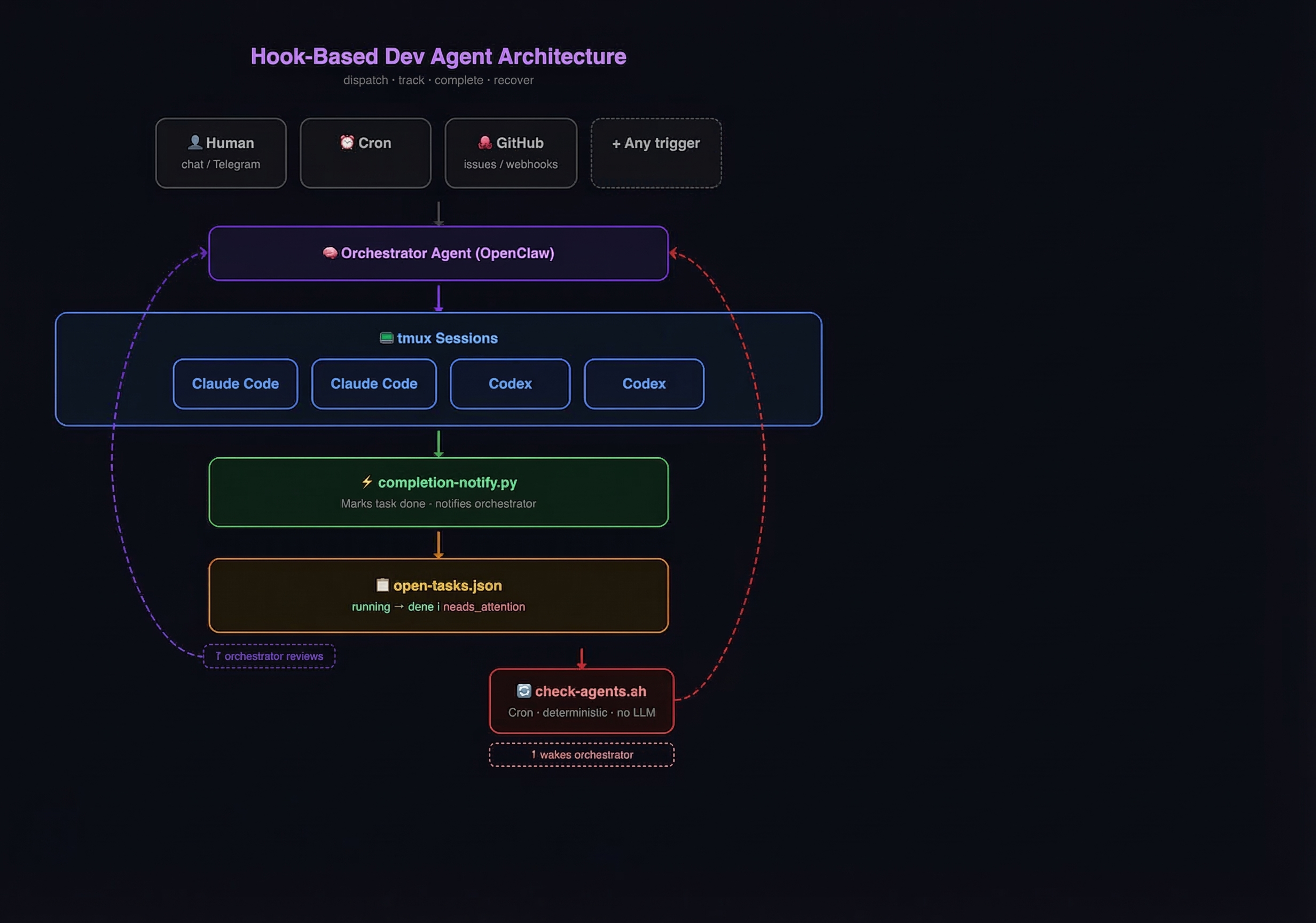

My v2 system, taking inspiration from Elvis, but simpler.

Background

I've been running AI agents as persistent assistants via OpenClaw since Jan 2026. Two agents live on a Mac mini: Clawd (personal assistant) and Maggie ('Chief' Executive for my SaaS, magicdoor.ai). They handle everything from daily planning to marketing to code deployment, mostly through Telegram.

For coding I've been using OpenClaw as the orchestrator, or Engineering Manager. The OpenClaw agent has all the context about the project, owns the roadmap, does the marketing, and is generally quite capable at prompting coding agents to implement features and fix bugs. But you can't just let the OpenClaw agent do the coding directly: it blocks that agent's thread, it's quite token-inefficient, and you can't do much parallel work. So my v1 system in Jan/Feb 2026 used exec to spawn Claude Code and Codex instances to do the work. It worked alright, but had some drawbacks, mainly that the agents would regularly fall into a black hole: they would never complete, or they would complete but OpenClaw would miss the result.

What I tried before

v1.1 — ACP subagents (February 2026): When OpenClaw released ACP I thought that was the solution I was looking for. But I couldn't get it to work. For some reason the coding agents often didn't report back to the correct session, so they would (again) just fall into a black hole.

v1.2 — tmux + sentinel polling (March 2026): Custom wrapper scripts that launched agents in tmux and polled for a sentinel string in the terminal output. This worked, but it didn't reliably loop on failed or timed-out tasks. At this point I had been inspired by Elvis' post on using tmux with a JSON task list and cron jobs. But I felt it had a lot of moving parts, and polling GitHub across all open PRs and tasks every 10 minutes seemed quite wasteful.

v2 — Hook-based completion with task list (March 2026): After a few failed attempts to loosely prompt my agents to adapt Elvis' ideas into our workflow and strip out some of the complexity, I decided to sit down and properly do a v2. Both Claude Code and Codex have native hook/notification systems. Claude Code fires a Stop hook when the agent finishes a turn. Codex has a notify config that calls an external script on turn completion. Zero polling needed. The agent itself tells you when it's done. This is what we're running now and I find it easily scales to well over 10 parallel coding agents per OpenClaw agent.

Architecture

Five components. No external services beyond the coding CLIs and OpenClaw.

Why tmux?

Tmux gives us:

- Detached execution: the agent runs in the background while the orchestrator handles other messages

- Mid-task steering: tmux send-keys lets the orchestrator course-correct a coding agent that's going sideways

- Crash resilience: tmux sessions survive orchestrator restarts, gateway restarts, network hiccups

- Inspection: tmux capture-pane lets the orchestrator peek at what the agent is doing without interrupting it

The Task list

A single JSON file per orchestrator agent. Minimal schema — six fields:

{
  "tasks": [
    {
      "tmux_session": "claude-fix-auth-redirect",
      "command": "claude --dangerously-skip-permissions 'Fix the auth redirect...'",
      "cwd": "/Users/me/projects/myapp",
      "started": "2026-03-17T14:00:00+08:00",
      "status": "running",
      "retries": 0
    }
  ]
}

Status lifecycle

running ──→ done (happy path: hook fires)

running ──→ needs_attention (failure: dead session or timeout)

needs_attention ──→ running (orchestrator retries with adjusted prompt)

needs_attention ──→ [removed] (orchestrator escalates to human)

done ──→ [removed] (orchestrator reviews and cleans up)
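The lifecycle above is small enough to encode as a lookup table. This is a minimal sketch, assuming the status strings from the task list; `ALLOWED`, `can_transition`, and the `"removed"` pseudo-status (standing in for "entry deleted from the list") are illustrative names, not part of the actual scripts.

```python
# Allowed status transitions, mirroring the lifecycle diagram.
# "removed" is a hypothetical marker meaning the entry is deleted from the list.
ALLOWED = {
    "running": {"done", "needs_attention"},
    "needs_attention": {"running", "removed"},
    "done": {"removed"},
}


def can_transition(current: str, new: str) -> bool:
    """Return True if the lifecycle permits moving from current to new."""
    return new in ALLOWED.get(current, set())


print(can_transition("running", "done"))   # hook fired on the happy path
print(can_transition("done", "running"))   # not part of the lifecycle
```

A check like this could guard any script that writes to the task list, so a late hook cannot flip a task that is already in needs_attention straight to an unexpected state.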

File locking

Both the hook script and the cron script acquire an exclusive lock (fcntl.flock) on a shared lock file before any read-modify-write. Concurrent completions queue instead of corrupting the JSON.
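The lock-then-read-modify-write pattern could be factored into one helper shared by both scripts. This is a sketch under assumptions: `locked_update` and its `mutate` callback are hypothetical names, and the write-to-temp-then-rename step is an extra safeguard added here, not something the original scripts are stated to do. `fcntl` is Unix-only, which matches the macOS setup described.

```python
import fcntl
import json
import os
import tempfile


def locked_update(tasks_file, lock_file, mutate):
    """Run mutate(data) on the task list while holding an exclusive lock.

    mutate receives the parsed JSON dict and changes it in place.
    """
    with open(lock_file, "w") as lock:
        fcntl.flock(lock, fcntl.LOCK_EX)  # blocks until other holders release
        with open(tasks_file) as f:
            data = json.load(f)
        mutate(data)
        # Write to a temp file in the same directory, then rename over the
        # original, so a crash mid-write cannot leave a truncated task list.
        directory = os.path.dirname(os.path.abspath(tasks_file))
        fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
        with os.fdopen(fd, "w") as tmp:
            json.dump(data, tmp, indent=2)
            tmp.write("\n")
        os.replace(tmp_path, tasks_file)
    # Leaving the with-block closes the lock file, which releases the flock.
    return data
```

Usage would look like `locked_update(tasks_file, "/tmp/open-tasks.lock", lambda d: d["tasks"].append(new_task))`, where `new_task` is a dict with the six fields shown earlier.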

Spawning agents

The orchestrator writes the task to the list first, then launches the tmux session. Order matters: if the launch fails, the cron catches the orphaned entry. If you launch first and crash before writing, the task is invisible.

Claude Code

tmux new-session -d -s {session_name} -x 220 -y 50 \
  -e OPENCLAW_AGENT={agent_id} \
  "cd {cwd} && claude --dangerously-skip-permissions '{prompt}'"

Codex

tmux new-session -d -s {session_name} -x 220 -y 50 \
  -e OPENCLAW_AGENT={agent_id} \
  "cd {cwd} && codex --yolo '{prompt}'"

After launching, verify with: tmux has-session -t SESSION_NAME
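One thing to watch in the launch commands above: a prompt containing an apostrophe (like "Don't touch X") would break the single-quoted '{prompt}'. A possible way around it, sketched here with a hypothetical helper `build_tmux_argv`, is to build the tmux argument list in Python and let `shlex` do the quoting. The tmux flags are the same ones used above; everything else is an assumption for illustration.

```python
import shlex


def build_tmux_argv(session_name, agent_id, cwd, cli_argv):
    """Build the argv for `tmux new-session` that starts a coding agent.

    cli_argv is the agent command as a list, e.g.
    ["claude", "--dangerously-skip-permissions", prompt].
    """
    # shlex.join quotes every element, so apostrophes or spaces in the
    # prompt cannot break the shell command that tmux runs.
    shell_cmd = f"cd {shlex.quote(cwd)} && {shlex.join(cli_argv)}"
    return [
        "tmux", "new-session", "-d", "-s", session_name,
        "-x", "220", "-y", "50",
        "-e", f"OPENCLAW_AGENT={agent_id}",
        shell_cmd,
    ]


argv = build_tmux_argv(
    "claude-fix-auth-redirect", "myagent", "/Users/me/projects/myapp",
    ["claude", "--dangerously-skip-permissions", "Don't touch the login page"],
)
print(argv[-1])  # the shell command tmux will run, safely quoted
```

The orchestrator would write the task entry first, then pass the list to `subprocess.run(argv, check=True)`, and finally confirm the session exists with `tmux has-session`, keeping the write-then-launch order described above.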

Steering mid-task

tmux send-keys -t {session_name} "Stop. You're overcomplicating this. Just modify the existing component." Enter

The agent receives this as typed input. Useful when you check progress and see the agent going in circles.

Checking progress

tmux capture-pane -t {session_name} -p | tail -20

Component 2: Completion Detection (Hook Script)

Both CLI tools support calling an external script when the agent finishes a turn.

Claude Code: Stop hook

Config in ~/.claude/settings.json:

{
  "hooks": {
    "Stop": [
      {
        "matcher": "",
        "hooks": [
          {
            "type": "command",
            "command": "python3 ~/.openclaw/hooks/completion-notify.py"
          }
        ]
      }
    ]
  }
}

Fires every time Claude Code finishes responding and returns to the prompt. Payload delivered via stdin as JSON. The hook is blocking — Claude waits for the script to exit.

Codex: notify

Config in ~/.codex/config.toml:

notify = ["python3", "/path/to/completion-notify.py"]

Fires on agent-turn-complete. Payload delivered as argv[1] JSON. Fire-and-forget (non-blocking).
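The two tools deliver their payload through different channels, so a shared script that wants to inspect it has to look in both places. The unifier script in this post does not need the payload at all (it identifies the task from the tmux session name), so the following is only a sketch of how one could read it, for logging for example. `read_payload` is a hypothetical helper, and the sample JSON strings in the usage lines are illustrative rather than complete payloads.

```python
import json


def read_payload(argv, stdin_text):
    """Return (source, payload) from whichever channel carried a JSON object.

    Codex passes the payload as a command-line argument; Claude Code
    writes it to stdin. Returns (None, {}) if neither parses.
    """
    candidates = []
    if len(argv) > 1:
        candidates.append(("codex", argv[1]))
    if stdin_text.strip():
        candidates.append(("claude", stdin_text))
    for source, raw in candidates:
        try:
            payload = json.loads(raw)
        except json.JSONDecodeError:
            continue  # not JSON on this channel, try the next one
        if isinstance(payload, dict):
            return source, payload
    return None, {}


# Illustrative inputs only; real payloads carry more fields.
print(read_payload(["hook.py", '{"type": "agent-turn-complete"}'], ""))
print(read_payload(["hook.py"], '{"hook_event_name": "Stop"}'))
```

In a real hook, `argv` would be `sys.argv` and `stdin_text` would come from `sys.stdin.read()`, read only when stdin is not a terminal so the script never blocks waiting for input.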

A unifier script

A single Python script handles both. ~60 lines.

#!/usr/bin/env python3
"""
Completion hook for Claude Code (Stop) and Codex (notify).
Updates the task list and notifies the orchestrator agent.
"""
import fcntl, json, os, subprocess, sys

# Agent task: agent_id → tasks file path
AGENT_CONFIG = {
    "myagent": {"tasks_file": os.path.expanduser("~/myagent/memory/open-tasks.json")},
}
LOCK_FILE = "/tmp/open-tasks.lock"

def get_tmux_session():
    try:
        r = subprocess.run(["tmux", "display-message", "-p", "#S"],
                           capture_output=True, text=True, timeout=5)
        return r.stdout.strip() if r.returncode == 0 else None
    except Exception:
        return None

def main():
    agent_id = os.environ.get("OPENCLAW_AGENT")
    if not agent_id or agent_id not in AGENT_CONFIG:
        return 0  # Not our session — exit cleanly
    session_name = get_tmux_session()
    if not session_name:
        return 0  # Not in tmux
    tasks_file = AGENT_CONFIG[agent_id]["tasks_file"]
    if not os.path.exists(tasks_file):
        return 0

    # Lock → read → update → write → release
    with open(LOCK_FILE, "w") as lock:
        fcntl.flock(lock, fcntl.LOCK_EX)
        with open(tasks_file, "r") as f:
            data = json.load(f)
        matched = False
        for task in data.get("tasks", []):
            if task.get("tmux_session") == session_name and task.get("status") == "running":
                task["status"] = "done"
                matched = True
                break
        if not matched:
            return 0
        with open(tasks_file, "w") as f:
            json.dump(data, f, indent=2)
            f.write("\n")

    # Notify the orchestrator
    msg = f"Task completed: {session_name}. Check the work and report back."
    subprocess.run(
        ["openclaw", "agent", "--agent", agent_id, "--message", msg],
        capture_output=True, text=True, timeout=30
    )
    return 0

if __name__ == "__main__":
    sys.exit(main())

Multi-agent routing

The hook configs are global: they fire for every Claude Code / Codex session on the machine. The OPENCLAW_AGENT environment variable (set via tmux -e at dispatch time) tells the script which agent's task list to update and which agent to notify. Sessions spawned outside this system (no env var set) cause the script to exit cleanly.

Adding a new agent = one entry in the AGENT_CONFIG dict + setting -e OPENCLAW_AGENT in their dispatch commands.

Component 3: Recovery loop (Cron Script)

A Python script (named .sh for cron convenience) that runs every 3 minutes. Purely deterministic — no LLM, no tokens, zero cost when idle.

*/3 * * * * ~/.openclaw/hooks/check-agents.sh

What it checks

For each configured agent's task list:

- Dead sessions: Is the tmux session still alive? (tmux has-session)

- Timeouts: Has the task been running longer than the timeout threshold? (default: 10 minutes)

If either condition is true, it marks the task needs_attention and sends a single message to the orchestrator agent via openclaw agent.
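The two checks are simple enough to express as one pure function. This is a sketch of the logic only, not the actual check-agents.sh: `find_problems` and the injected `is_alive` callable are hypothetical names, and in a real script `is_alive` would wrap a call to `tmux has-session -t NAME`.

```python
from datetime import datetime, timedelta

TIMEOUT = timedelta(minutes=10)  # default timeout mentioned above


def find_problems(tasks, now, is_alive, timeout=TIMEOUT):
    """Return (task, reason) pairs for running tasks that need attention.

    is_alive takes a tmux session name and returns True if it still exists.
    now must be timezone-aware, like the "started" timestamps.
    """
    problems = []
    for task in tasks:
        if task.get("status") != "running":
            continue  # only running tasks are checked
        if not is_alive(task["tmux_session"]):
            problems.append((task, "dead session"))
        elif now - datetime.fromisoformat(task["started"]) > timeout:
            problems.append((task, "timeout"))
    return problems


tasks = [{"tmux_session": "claude-fix-auth-redirect",
          "started": "2026-03-17T14:00:00+08:00", "status": "running"}]
now = datetime.fromisoformat("2026-03-17T14:12:00+08:00")
for task, reason in find_problems(tasks, now, is_alive=lambda name: True):
    task["status"] = "needs_attention"
    print(task["tmux_session"], reason)  # prints: claude-fix-auth-redirect timeout
```

Keeping the tmux call behind a callable makes the check testable without a running tmux server, and keeps the function free of any retry or kill logic.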

What it deliberately does NOT do

- Retry or restart agents (the orchestrator makes all recovery decisions)

- Read tmux panes (that's the orchestrator's job during diagnosis)

- Reason about what went wrong (it's deterministic, not an LLM)

- Kill anything

The cron is a dead man's switch, not a supervisor. It detects problems and tells the smart agent to deal with them.

The timeout is a check-in, not a kill switch

When a task exceeds the timeout, it's marked needs_attention. Heavy coding agent plans regularly take well over ten minutes. The orchestrator wakes up, inspects the tmux pane, and decides:

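- Still progressing? Set status back to running. Don't reset started. Because started is unchanged, the task is already past the timeout at the next cron cycle, so the timeout triggers again and the orchestrator checks in again. As described, a long-running task is therefore re-checked on every cron run (every 3 minutes) once it has crossed the 10-minute mark.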

- Stuck or looping? Kill the session, increment retries, re-dispatch with an adjusted prompt.

- Dead? Check git for partial work. Retry or escalate.

- retries >= 3? Escalate to the human. Stop looping.

This means the system is self-healing for transient failures but escalates persistent ones instead of burning cycles.

Component 4: Claude and Codex working together

For complex changes, a single coding agent isn't enough. The dev loop uses two agents in alternating roles:

Claude Code: implement

↓

Codex: review the changes (diff vs main)

↓

Claude Code: fix issues found

↓

Codex: re-review

↓

(repeat until clean)

↓

E2E test on preview deployment

↓

Report to human

Each step is a separate tmux dispatch following the same pattern. The orchestrator waits for the hook-based completion notification between steps.

The rule: never tell the human "done" until the review cycle is complete and E2E passes. Implementation without review is a draft.

Component 5: The Orchestrator's Role

The orchestrator agent (the OpenClaw agent that manages all of this) is the Engineering Manager. It doesn't write code. It:

- Scopes work: breaks features into concrete, single-purpose tasks

- Writes prompts: includes what, where, why, constraints, branch naming

- Dispatches: writes to task list, launches tmux, confirms alive

- Waits: does other work while agents run (handles messages, marketing, planning)

- Reviews: reads git log, captures panes, checks PRs on completion

- Retries or escalates: makes judgment calls on failures

- Reports: tells the human what happened, what's next

Prompt template for coding agents:

{what to do — specific and concrete}

{where — file paths}

{why — business context}

{constraints — don't touch X, backwards compatible, etc}

Branch: feat/feature-name

Do NOT merge PRs — only create them.
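If the orchestrator assembles prompts programmatically, the template above maps onto a small function. This is a sketch: `build_prompt` and its parameter names are made up for illustration, and the closing rule is paraphrased from the template's last line.

```python
NO_MERGE_RULE = "Do NOT merge PRs, only create them."


def build_prompt(what, where, why, constraints, branch):
    """Fill the coding-agent prompt template; every argument is a plain string."""
    return "\n".join([
        what,          # specific and concrete task
        where,         # file paths
        why,           # business context
        constraints,   # e.g. "don't touch X", "keep backwards compatible"
        f"Branch: {branch}",
        NO_MERGE_RULE,
    ])


print(build_prompt(
    what="Fix the auth redirect so users return to the page they came from.",
    where="Look at the login route and the auth middleware.",
    why="Users currently land on the home page after login and lose their place.",
    constraints="Don't change the session cookie format.",
    branch="feat/fix-auth-redirect",
))
```

The example values are invented; the point is only that every prompt ends with the same branch line and the same no-merge rule, so agents cannot be dispatched without them.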

Details on key design decisions

When I look at things like Elvis' setup, which now has a CLI in between, or at the extreme look into Gastown, I see a lot of complexity. It would take me a lot of time to understand exactly how it works, and it just doesn't fit with my own minimalistic tendencies. So going into this, my goal was to build the simplest solution to the problem with the lowest number of files. Current AI tends to be verbose and do a lot of things 'just in case'. The 12 fields in Claude's original schema for the task list are a good example. I pared it down to just 6. Funnily enough, cutting things down seems to be something the AI cannot come up with itself, but is very happy about when prompted for it.

Worktrees

I run up to 10 agents on one checkout by just isolating features. I understand the point of worktrees, but I haven't yet encountered the problem they solve. However, if you want to add worktrees to this, it's a simple change. Elvis' article (linked above) has a script that wraps the spawn command to create a worktree first. It is probably also possible to just add one line to the skill: "always tell agents to create their own worktree".

10-minute timeout, 3-minute cron

An aggressive timeout (10 min) means the orchestrator checks in frequently on long-running tasks. The checker cron runs every 3 minutes, so the worst-case delay before a timed-out task is flagged is ~13 minutes (the 10-minute timeout plus one 3-minute cron interval). This is fine: the hook handles the happy path, and the cron is only for failures.
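The ~13-minute figure can be checked with a few lines of arithmetic. This sketch assumes cron ticks land on exact multiples of the interval and that a task is flagged once its age reaches the timeout; `first_flag_delay` is a hypothetical helper, and times are in seconds.

```python
TIMEOUT_S = 10 * 60   # task timeout from the post
INTERVAL_S = 3 * 60   # cron interval from the post


def first_flag_delay(start_s, timeout_s=TIMEOUT_S, interval_s=INTERVAL_S):
    """Seconds from task start until the first cron tick that sees a timeout.

    start_s is when the task started, measured from a cron tick at 0.
    """
    deadline = start_s + timeout_s
    ticks_needed = -(-deadline // interval_s)  # ceiling division
    return ticks_needed * interval_s - start_s


# Try every possible start offset within one cron interval.
worst = max(first_flag_delay(s) for s in range(INTERVAL_S))
print(worst / 60)  # just under 13 minutes
```

The worst case is a task that starts a moment too late to be caught by the tick at the 12-minute mark, so it waits for the next one. A task that starts exactly on a tick is flagged after 12 minutes.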

Setup Checklist

For humans

Give this blogpost to your agent.

For Agents

- Create the task list: memory/open-tasks.json with {"tasks": []}

- Install the hook script: ~/.openclaw/hooks/completion-notify.py (or wherever works for your setup)

- Configure Claude Code: Add Stop hook to ~/.claude/settings.json

- Configure Codex: Add notify to ~/.codex/config.toml

- Install the cron script: ~/.openclaw/hooks/check-agents.sh

- Install the cron job: */3 * * * * ~/.openclaw/hooks/check-agents.sh

- Write your skill docs: teach your orchestrator agent the dispatch pattern

- Test the happy path: dispatch a trivial task, confirm hook fires, confirm notification arrives

- Test recovery: kill a tmux session manually, confirm cron detects it and notifies

Requirements

- OpenClaw (agent orchestration + openclaw agent CLI for notifications) or some other Claw

- Claude Code and/or Codex

- tmux